What CrashLoopBackOff Actually Means

Kubernetes troubleshooting advice keeps getting worse, not better, with all the conflicting tips flying around. So let me cut straight to it. CrashLoopBackOff means one thing: your container keeps dying shortly after it starts, and Kubernetes keeps restarting it. The back-off delay doubles after each crash (10 seconds, then 20, then 40, and so on) until it caps at 5 minutes, giving the container more breathing room between attempts.
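To make the arithmetic concrete, here is a minimal Python sketch of that schedule. It assumes a 10-second starting delay that doubles after every crash and is capped at 300 seconds, as described above; `backoff_schedule` is a hypothetical helper, and the kubelet's real timing is approximate rather than exact to the second.

```python
def backoff_schedule(restarts: int, base: int = 10, cap: int = 300) -> list[int]:
    """Return the back-off delay (seconds) before each of the first `restarts` restarts.

    Assumes the delay starts at `base`, doubles after every crash, and never
    exceeds `cap`.
    """
    delays = []
    delay = base
    for _ in range(restarts):
        delays.append(min(delay, cap))
        delay *= 2
    return delays


if __name__ == "__main__":
    print(backoff_schedule(6))  # [10, 20, 40, 80, 160, 300]
```

The sixth delay would be 320 seconds without the cap, which is why the sequence flattens out at 5 minutes from that point on.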

So what is CrashLoopBackOff, really? At its core, it’s Kubernetes doing exactly what it’s supposed to do. It’s also a signal: your application code, your configuration, or something in your environment is broken. The orchestrator isn’t the problem. I spent an embarrassing amount of time blaming Kubernetes early in my career before I internalized that.

Fixing this means figuring out why the container exits the moment it starts — not poking at cluster settings.

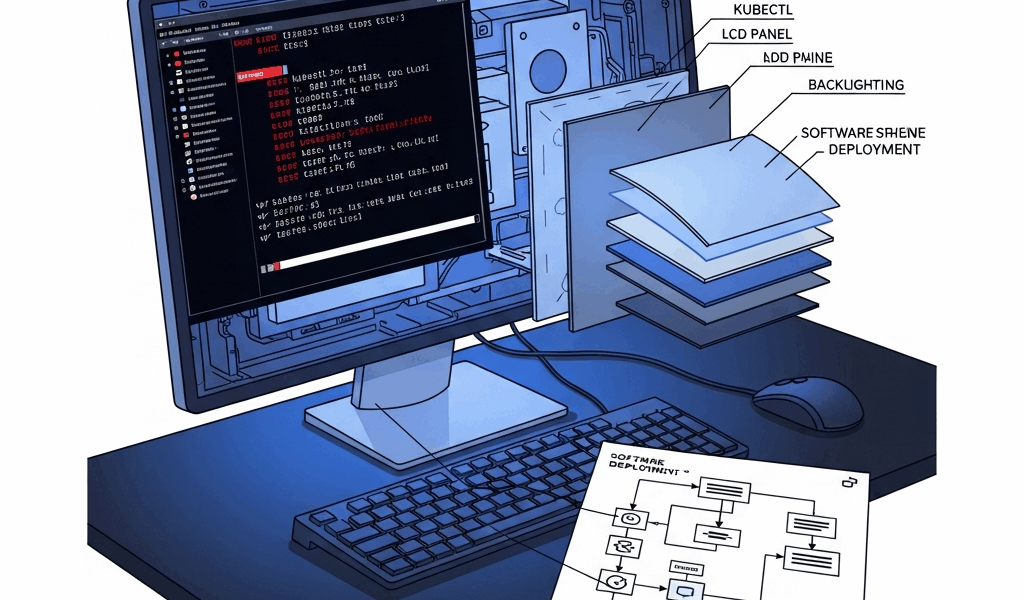

Run These Commands First

I’ve stared down more failing pods than I’d like to admit, and most of the time I lost wasn’t spent fixing anything. It was spent looking in the wrong place. Here’s what I do now instead.

Faced with a crashing pod, I used to jump straight to the logs and lose fifteen minutes chasing phantom errors. Now I follow a three-command sequence. It takes two minutes, maybe, and tells me almost everything.

Step 1: List the Pod and Check Restart Count

```shell
kubectl get pods -n default
```

Output looks like this:

```
NAME                READY   STATUS             RESTARTS   AGE
my-app-5d4f7c8b9e   0/1     CrashLoopBackOff   7          4m22s
other-pod-xyz       1/1     Running            0          2d
```

The RESTARTS column is your first clue. Seven restarts means this isn’t a fluke: something is consistently broken. STATUS confirms where you are. AGE tells you how long ago the pod was created, which you can line up against your last deployment. High restart counts narrow the diagnosis fast.
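If you are juggling more than a couple of pods, the same check can be scripted. This is a sketch that assumes `kubectl` is on your PATH and reads the standard Pod status fields `containerStatuses[].state.waiting.reason` and `restartCount` from `kubectl get pods -o json`; the helper names are hypothetical.

```python
import json
import subprocess


def crashlooping_pods(pod_list: dict) -> list[tuple[str, str, int]]:
    """Return (pod, container, restartCount) for containers waiting in CrashLoopBackOff.

    `pod_list` is the parsed output of `kubectl get pods -o json`.
    """
    found = []
    for pod in pod_list.get("items", []):
        name = pod["metadata"]["name"]
        for status in pod.get("status", {}).get("containerStatuses", []):
            waiting = status.get("state", {}).get("waiting") or {}
            if waiting.get("reason") == "CrashLoopBackOff":
                found.append((name, status["name"], status.get("restartCount", 0)))
    # Highest restart counts first: those are the most consistently broken.
    return sorted(found, key=lambda item: item[2], reverse=True)


def fetch_pods(namespace: str = "default") -> dict:
    """Run kubectl and parse its JSON output (requires kubectl on PATH)."""
    out = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    return json.loads(out)


if __name__ == "__main__":
    for pod, container, restarts in crashlooping_pods(fetch_pods()):
        print(f"{pod}/{container}: {restarts} restarts")
```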

Step 2: Describe the Pod for Event Details

```shell
kubectl describe pod my-app-5d4f7c8b9e -n default
```

Scroll to the Events section at the bottom. You’ll see output roughly like this:

```
Events:
  Type     Reason     Age                  From               Message
  ----     ------     ----                 ----               -------
  Normal   Scheduled  4m18s                default-scheduler  Successfully assigned default/my-app-5d4f7c8b9e to node-2
  Normal   Pulling    4m17s                kubelet            Pulling image "myregistry/app:v1.2"
  Normal   Pulled     4m15s                kubelet            Successfully pulled image
  Normal   Created    4m14s                kubelet            Created container app
  Normal   Started    4m14s                kubelet            Started container app
  Warning  BackOff    2m10s (x12 over 4m)  kubelet            Back-off restarting failed container
```

Look hard at what appears between Started and BackOff, and at the Last State block in the container section above the events. Image pull failures show up here. Permission errors. OOM kills. In my experience, this single step resolves roughly 30% of cases before you ever open a log file.
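The Last State details are also available as structured data, which makes them easy to triage in a script. A sketch, assuming you feed it the parsed output of `kubectl get pod NAME -o json`; it reads `status.containerStatuses[].lastState.terminated`, and the exit-code hints are my own rough heuristics, not Kubernetes rules.

```python
import json
import subprocess
import sys
from typing import Optional


def hint(reason: str, exit_code: Optional[int]) -> str:
    """Rough, hypothetical triage rules for a container's last termination."""
    if reason == "OOMKilled":
        return "hit its memory limit: raise the limit or find the leak"
    if exit_code is None:
        return "no exit code recorded"
    if exit_code == 137:
        return "killed with SIGKILL (128 + 9): check memory limits and liveness probes"
    if exit_code != 0:
        return "application exited with an error: read the --previous logs"
    return "exited with code 0: the main process finished instead of staying up"


def last_terminations(pod: dict) -> list[dict]:
    """Summarize status.containerStatuses[].lastState.terminated for one pod."""
    summary = []
    for status in pod.get("status", {}).get("containerStatuses", []):
        terminated = status.get("lastState", {}).get("terminated")
        if not terminated:
            continue  # no recorded previous termination for this container
        reason = terminated.get("reason", "Unknown")
        exit_code = terminated.get("exitCode")
        summary.append({
            "container": status["name"],
            "reason": reason,
            "exitCode": exit_code,
            "hint": hint(reason, exit_code),
        })
    return summary


if __name__ == "__main__":
    out = subprocess.run(
        ["kubectl", "get", "pod", sys.argv[1], "-n", "default", "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    for item in last_terminations(json.loads(out)):
        print(item)
```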

Step 3: Read the Previous Container’s Logs

```shell
kubectl logs my-app-5d4f7c8b9e -n default --previous
```

The --previous flag is the part people miss. After a restart, the current container instance has nothing useful. You need logs from the crashed one, the dead version. Output might look like:

```
Starting application...
Connecting to database at localhost:5432
panic: dial tcp: lookup localhost: no such host

goroutine 1 [running]:
main.main()
	/app/main.go:42 +0x5a8
```

There it is. That’s what killed the process. Keep this output open — you’ll need it for everything that follows.
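When the log is long, a small filter can jump straight to the first line that looks fatal. This is a heuristic sketch, not a parser: the patterns are assumptions that catch Go panics plus typical Python, Java, and Node error lines, and `previous_logs` simply shells out to the same `kubectl logs --previous` command.

```python
import re
import subprocess
import sys
from typing import Optional

# Heuristic markers for "this is the line that killed the process".
# Extend the list for your own runtime's error format.
FATAL_PATTERNS = [
    re.compile(r"^panic: "),                 # Go runtime panics
    re.compile(r"^fatal", re.IGNORECASE),    # "fatal error:", "FATAL ..."
    re.compile(r"(Error|Exception)\b"),      # KeyError:, NullPointerException, Error: ...
]


def first_fatal_line(log_text: str) -> Optional[str]:
    """Return the first log line matching any fatal pattern, or None."""
    for line in log_text.splitlines():
        stripped = line.strip()
        if any(p.search(stripped) for p in FATAL_PATTERNS):
            return stripped
    return None


def previous_logs(pod: str, namespace: str = "default") -> str:
    """Fetch logs from the previous (crashed) container instance."""
    return subprocess.run(
        ["kubectl", "logs", pod, "-n", namespace, "--previous"],
        check=True, capture_output=True, text=True,
    ).stdout


if __name__ == "__main__":
    print(first_fatal_line(previous_logs(sys.argv[1])) or "no obvious fatal line")
```

Run against the sample output above, it picks out `panic: dial tcp: lookup localhost: no such host`.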

The 6 Root Causes and How to Spot Each One

1. Application Error or Panic on Startup (40% of cases)

Your code is broken. It crashes instantly, sometimes within two or three seconds of starting. You’ll see panic traces, unhandled exceptions, or fatal interpreter errors in kubectl logs --previous: stack traces, file-not-found errors, that kind of thing.

The fix is straightforward, even if it’s painful: replicate it locally. Run docker run myimage:tag with the same environment variables and watch it fail identically. Then fix the code, rebuild, redeploy. That’s it. The most common lesson production teaches you, honestly — and it teaches it repeatedly until it sticks.
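Reproducing locally is easier if the `docker run` command is generated from the container spec instead of typed by hand. A sketch under stated assumptions: the input is one entry from `spec.template.spec.containers` (parsed from `kubectl get deployment NAME -o json`), only literal `value` env vars are copied, anything set through `valueFrom` (Secrets, ConfigMaps, field refs) is reported so you can supply it yourself, and `command`/`args` overrides are deliberately ignored.

```python
import json
import shlex
import sys


def docker_run_args(container: dict) -> tuple[list[str], list[str]]:
    """Build a `docker run` argv mirroring a container's image and literal env vars.

    Returns (argv, skipped), where `skipped` names env vars that use valueFrom
    and therefore can't be copied verbatim.
    """
    argv = ["docker", "run", "--rm"]
    skipped = []
    for var in container.get("env", []):
        if "value" in var:
            argv += ["-e", f"{var['name']}={var['value']}"]
        else:
            skipped.append(var["name"])
    argv.append(container["image"])
    return argv, skipped


if __name__ == "__main__":
    # Usage: kubectl get deployment my-app -o json | python repro.py
    deployment = json.load(sys.stdin)
    container = deployment["spec"]["template"]["spec"]["containers"][0]
    argv, skipped = docker_run_args(container)
    print(shlex.join(argv))
    if skipped:
        print("set these by hand:", ", ".join(skipped), file=sys.stderr)
```

The deployment name `my-app` in the usage comment is hypothetical; substitute yours.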

2. Bad or Missing Environment Variables (25% of cases)

The app expects an environment variable that simply isn’t there, and rather than defaulting gracefully, it crashes. Logs show something like “undefined variable: DATABASE_URL” — sometimes even cleaner than that. Check your deployment YAML and look under env: in the container spec. Compare what’s set against what your application actually requires.

A missing variable like LOG_LEVEL or API_KEY is quicker to fix than to debug in depth. In a Deployment, add it under spec.template.spec.containers[].env, apply the updated YAML with kubectl apply -f, and let Kubernetes roll out new pods. Done in under five minutes if you catch it early.
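Comparing "what the app needs" against "what the spec sets" is a set difference. A minimal sketch, assuming the Deployment is supplied as parsed `kubectl get deployment NAME -o json` output; the `REQUIRED` set is a hypothetical placeholder for whatever your application actually reads, and variables injected wholesale through `envFrom` are not visible to this check.

```python
import json
import sys


def declared_env(container: dict) -> set[str]:
    """Names set directly under `env` (envFrom ConfigMap/Secret refs are not expanded)."""
    return {var["name"] for var in container.get("env", [])}


def missing_env_vars(required: set[str], deployment: dict) -> dict[str, list[str]]:
    """Map each container name to the required variables it doesn't declare."""
    containers = deployment["spec"]["template"]["spec"]["containers"]
    result = {}
    for c in containers:
        missing = required - declared_env(c)
        if missing:
            result[c["name"]] = sorted(missing)
    return result


if __name__ == "__main__":
    # Hypothetical list: replace with the variables your application requires.
    REQUIRED = {"DATABASE_URL", "LOG_LEVEL", "API_KEY"}
    print(missing_env_vars(REQUIRED, json.load(sys.stdin)))
```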

3. Liveness Probe Misconfiguration (15% of cases)

A liveness probe fires before the application finishes initializing. The probe fails. Kubernetes assumes the container is dead and kills it. Logs show nothing obviously wrong (the app looks healthy), but it keeps getting terminated at roughly the same point after every start. This one trips people up badly.

It deserves its own section below, so I’ll leave it there. Don’t skip that part.

4. OOMKilled at Launch (12% of cases)

The container blows past its memory limit during startup, before it’s even serving traffic. The logs stop abruptly or come back empty, and kubectl describe pod shows Reason: OOMKilled (exit code 137) under Last State. The memory limit is simply too tight for what the app needs to initialize.

Increase both the request and limit in your deployment spec: try resources.requests.memory: 512Mi and resources.limits.memory: 1Gi as a starting point. Redeploy and watch. If memory keeps climbing after stabilization, you’ve got a leak — that’s a separate problem worth addressing deliberately, not by raising limits forever.
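Kubernetes memory quantities mix binary and decimal suffixes, which makes it easy to misjudge how much room a limit really gives you: 512Mi is 536,870,912 bytes, while 512M is 512,000,000. A small sketch that converts the common suffixes and computes headroom against a limit. It handles only a subset of the quantity format (no exponents or milli-units), and the peak figure is whatever you measured yourself.

```python
# Subset of Kubernetes quantity suffixes: binary (Ki, Mi, Gi, Ti) and decimal (k, M, G, T).
# Two-letter binary suffixes come first so "Mi" is never mistaken for "M".
SUFFIXES = [
    ("Ki", 2**10), ("Mi", 2**20), ("Gi", 2**30), ("Ti", 2**40),
    ("k", 10**3), ("M", 10**6), ("G", 10**9), ("T", 10**12),
]


def to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '512Mi' or '1Gi' to bytes."""
    for suffix, factor in SUFFIXES:
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * factor)
    return int(quantity)  # plain byte count, no suffix


def headroom(peak_usage: str, limit: str) -> float:
    """Fraction of the limit still unused at peak (negative means over the limit)."""
    return 1 - to_bytes(peak_usage) / to_bytes(limit)


if __name__ == "__main__":
    print(to_bytes("512Mi"), to_bytes("1Gi"))  # 536870912 1073741824
    print(headroom("768Mi", "1Gi"))            # 0.25
```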

5. Image Pull Failure (5% of cases)

The image doesn’t exist, or it sits behind a private registry with no credentials configured. Strictly speaking, kubectl describe pod shows ErrImagePull or ImagePullBackOff here rather than CrashLoopBackOff, but the two are easy to confuse when you’re scanning the STATUS column. Logs are empty because the container never actually started.

Verify the tag: run kubectl describe pod | grep Image and look closely. Typos like pythoon:3.9 instead of python:3.9 happen more than anyone admits. For private registries, confirm your pull secret is referenced under imagePullSecrets in the deployment spec.
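The pod's JSON shows pull failures in `status.containerStatuses[].state.waiting`, with the image string exactly as Kubernetes tried to pull it, which is where a typo like `pythoon:3.9` becomes obvious. A sketch, assuming parsed `kubectl get pod NAME -o json` output; `image_pull_problems` is a hypothetical helper, and `ErrImagePull`, `ImagePullBackOff`, and `InvalidImageName` are the waiting reasons it looks for.

```python
import json
import sys

PULL_REASONS = {"ErrImagePull", "ImagePullBackOff", "InvalidImageName"}


def image_pull_problems(pod: dict) -> list[dict]:
    """List containers stuck on image pulls, with the image and pull secrets in play."""
    secrets = [s["name"] for s in pod.get("spec", {}).get("imagePullSecrets", [])]
    problems = []
    for status in pod.get("status", {}).get("containerStatuses", []):
        waiting = status.get("state", {}).get("waiting") or {}
        if waiting.get("reason") in PULL_REASONS:
            problems.append({
                "container": status["name"],
                "image": status.get("image"),
                "reason": waiting["reason"],
                "message": waiting.get("message", ""),
                "pullSecrets": secrets,  # empty list: no credentials referenced at all
            })
    return problems


if __name__ == "__main__":
    # Usage: kubectl get pod my-app-5d4f7c8b9e -o json | python pullcheck.py
    for problem in image_pull_problems(json.load(sys.stdin)):
        print(problem)
```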

6. Config or Secret Mount Missing (3% of cases)

The app expects a config file, certificate, or secret at a specific path — say, /etc/config/app.conf — and it simply isn’t there. Logs say exactly that: “open /etc/config/app.conf: no such file or directory.”

Check the volumeMounts section of your deployment against the volumes definition. Then verify the underlying ConfigMap or Secret actually exists: kubectl get configmaps and kubectl get secrets. Mismatched names between the volume declaration and the actual resource are the culprit almost every time.
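That cross-check can be automated. A sketch under assumptions: `pod_spec` is a pod spec (for a Deployment, `spec.template.spec`), the existing ConfigMap and Secret names come from `kubectl get configmaps -o name` and `kubectl get secrets -o name` (which print lines like `configmap/app-config`), and volumes marked `optional: true` are skipped. Helper names are hypothetical.

```python
import subprocess


def existing_names(kind: str, namespace: str = "default") -> set[str]:
    """Names of existing resources of `kind` (e.g. 'configmaps', 'secrets')."""
    out = subprocess.run(
        ["kubectl", "get", kind, "-n", namespace, "-o", "name"],
        check=True, capture_output=True, text=True,
    ).stdout
    # Each line looks like "configmap/app-config"; keep the part after the slash.
    return {line.split("/", 1)[1] for line in out.splitlines() if "/" in line}


def mount_problems(pod_spec: dict, configmaps: set[str], secrets: set[str]) -> list[str]:
    """Report mounts with no matching volume, or volumes pointing at missing resources."""
    volumes = {v["name"]: v for v in pod_spec.get("volumes", [])}
    problems = []
    for container in pod_spec.get("containers", []):
        for mount in container.get("volumeMounts", []):
            vol = volumes.get(mount["name"])
            if vol is None:
                problems.append(f"{container['name']}: mount '{mount['name']}' has no matching volume")
                continue
            cm = vol.get("configMap")
            if cm and not cm.get("optional") and cm["name"] not in configmaps:
                problems.append(f"volume '{mount['name']}': ConfigMap '{cm['name']}' not found")
            sec = vol.get("secret")
            if sec and not sec.get("optional") and sec["secretName"] not in secrets:
                problems.append(f"volume '{mount['name']}': Secret '{sec['secretName']}' not found")
    return problems
```

Typical use: `mount_problems(spec, existing_names("configmaps"), existing_names("secrets"))`, which needs kubectl access to the namespace.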

Fix Liveness and Readiness Probes: What People Get Wrong

Probe misconfiguration is the most misdiagnosed cause of CrashLoopBackOff out there, because the application logs look completely fine and Kubernetes appears to be killing a healthy container for no reason.

Here’s what’s actually happening: your liveness probe starts too early. Say your app needs 30 seconds to initialize its database connection pool. The probe fires at 10 seconds, sees no response at /health, and marks it failed. Three failures later — 30 seconds total — Kubernetes kills the container. The cycle starts over. CrashLoopBackOff.
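The timing above is plain arithmetic: the first probe runs at the initial delay, later probes follow every period, and the restart comes after the threshold's worth of consecutive failures. This sketch ignores probe timeouts and scheduling jitter, so treat its answer as a rough estimate; the function names are hypothetical.

```python
def seconds_until_restart(initial_delay: int, period: int, failure_threshold: int) -> int:
    """Approximate seconds from container start to a liveness-probe restart,
    assuming every probe fails."""
    return initial_delay + (failure_threshold - 1) * period


def survives_startup(startup_seconds: float, initial_delay: int, period: int,
                     failure_threshold: int) -> bool:
    """True if the app should be healthy before the last allowed probe fails.
    A tie counts as a failure, since real probes also need time to respond."""
    return seconds_until_restart(initial_delay, period, failure_threshold) > startup_seconds


if __name__ == "__main__":
    # The scenario above: 30-second startup, first probe at 10s, every 10s, 3 failures.
    print(seconds_until_restart(10, 10, 3))   # 30
    print(survives_startup(30, 10, 10, 3))    # False
```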

Before (broken YAML):

```yaml
livenessProbe:
  httpGet:
    path: /health
    port: 8080
  initialDelaySeconds: 5
  periodSeconds: 10
  failureThreshold: 3
```

Five seconds. That’s nowhere near enough for a real application to boot, initialize connections, and start serving a health endpoint. I’m apparently a slow learner on this one: initialDelaySeconds: 5 burned me twice in staging before I stopped using it as a default entirely.

After (fixed YAML):

```yaml
livenessProbe:
  httpGet:
    path: /health
    port: 8080
  initialDelaySeconds: 45
  periodSeconds: 10
  failureThreshold: 3
```

Forty-five seconds. The probe doesn’t start checking until the app has had real time to come up. Don’t treat 45 as a magic number, though; measure it. Start the container locally, time how long until /health actually responds, then add 10 to 15 seconds as a buffer. That number becomes your initialDelaySeconds. Don’t make my mistake of picking something that feels reasonable without measuring.
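The measuring step can be a short polling loop. A sketch, assuming the container's port 8080 is published locally (for example with `docker run -p 8080:8080 ...`) so the `/health` path from the probe above is reachable at a hypothetical `http://localhost:8080/health`. Like an httpGet probe, it counts any status from 200 to 399 as success. It times from the moment the script starts, so launch it right after the container.

```python
import http.client
import math
import time
import urllib.request
from typing import Callable, Optional

# Assumed local endpoint matching the httpGet probe above.
HEALTH_URL = "http://localhost:8080/health"


def http_ok(url: str = HEALTH_URL, timeout: float = 1.0) -> bool:
    """Mimic an httpGet probe: any status from 200 to 399 counts as success."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    except (OSError, http.client.HTTPException):
        # Connection refused, timeouts, and 4xx/5xx responses (HTTPError) land here.
        return False


def measure_startup(check: Callable[[], bool], timeout: float = 300.0,
                    interval: float = 0.5) -> Optional[float]:
    """Poll `check` until it succeeds; return elapsed seconds, or None on timeout."""
    start = time.monotonic()
    while time.monotonic() - start < timeout:
        if check():
            return time.monotonic() - start
        time.sleep(interval)
    return None


def recommended_initial_delay(startup_seconds: float, buffer: int = 15) -> int:
    """Measured startup rounded up, plus a 10-15 second buffer."""
    return math.ceil(startup_seconds) + buffer


if __name__ == "__main__":
    elapsed = measure_startup(http_ok)
    if elapsed is None:
        print("no healthy response within the timeout")
    else:
        print(f"healthy after {elapsed:.1f}s; try initialDelaySeconds: "
              f"{recommended_initial_delay(elapsed)}")
```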

Use a readinessProbe to gate traffic and a livenessProbe only to catch stuck processes: deadlocks, infinite loops, that kind of thing. A failing readiness probe only takes the pod out of its Service endpoints; the container keeps running. A failing liveness probe gets the container killed and restarted. That’s why the distinction matters so much. Most probe-related crashes I’ve seen trace back to someone setting liveness too aggressively, or confusing the two probe types entirely.
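Those two mistakes can be caught with a quick lint over the container spec. A sketch built on my own heuristics, not official rules: it flags a liveness probe with no readiness probe, identical liveness and readiness probes, and a liveness probe that can restart the container before your measured startup time. Omitted fields fall back to the Kubernetes defaults (initialDelaySeconds 0, periodSeconds 10, failureThreshold 3), and probe timeouts are ignored.

```python
# Kubernetes defaults for probe fields that are omitted.
DEFAULTS = {"initialDelaySeconds": 0, "periodSeconds": 10, "failureThreshold": 3}


def lint_probes(container: dict, startup_seconds: float) -> list[str]:
    """Flag probe setups that tend to cause restart loops. Heuristics only."""
    warnings = []
    liveness = container.get("livenessProbe")
    readiness = container.get("readinessProbe")
    if liveness and not readiness:
        warnings.append("liveness probe but no readiness probe: nothing gates traffic without a restart")
    if liveness and readiness and liveness == readiness:
        warnings.append("identical probes: a check meant to gate traffic will also restart the container")
    if liveness:
        delay = liveness.get("initialDelaySeconds", DEFAULTS["initialDelaySeconds"])
        period = liveness.get("periodSeconds", DEFAULTS["periodSeconds"])
        threshold = liveness.get("failureThreshold", DEFAULTS["failureThreshold"])
        kill_at = delay + (threshold - 1) * period
        if kill_at <= startup_seconds:
            warnings.append(f"liveness can restart the container at ~{kill_at}s, "
                            f"before the measured {startup_seconds}s startup")
    return warnings
```

Run against the "before" YAML above with a 30-second startup, it reports both the missing readiness probe and a restart at roughly 25 seconds.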

How to Confirm the Fix Worked

After applying your fix, watch the pod in real time:

```shell
kubectl get pods -w -n default
```

You’ll see the pod move through states: ContainerCreating, then Running, then possibly Error and back to CrashLoopBackOff if the fix missed the mark. A successful recovery looks like this: the pod enters Running and stays there, RESTARTS stops climbing, and READY shows 1/1.

Stabilizes immediately? Fix worked. Still restarting after 30 seconds? Either you missed something or the new logs are showing a different error entirely. Run kubectl logs --previous again. Re-diagnose fresh — sometimes fixing one issue exposes a second one that was hiding behind it.
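The same confirmation can be automated in a rollout script. A sketch, assuming kubectl is on your PATH: it samples the pod's total `restartCount` and per-container `ready` flags several times and calls the pod stable only if the restart count never moves and every sample is ready. After a Deployment rollout the fixed pod usually has a new name, so pass the new pod's name. The sample count and interval are arbitrary defaults.

```python
import json
import subprocess
import sys
import time


def snapshot(pod: str, namespace: str = "default") -> tuple[int, bool]:
    """Return (total restarts, all containers ready) for one pod."""
    out = subprocess.run(
        ["kubectl", "get", "pod", pod, "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    statuses = json.loads(out).get("status", {}).get("containerStatuses", [])
    restarts = sum(s.get("restartCount", 0) for s in statuses)
    ready = bool(statuses) and all(s.get("ready", False) for s in statuses)
    return restarts, ready


def is_stable(samples: list[tuple[int, bool]]) -> bool:
    """Stable means: at least two samples, restart count unchanged, ready every time."""
    if len(samples) < 2:
        return False
    baseline = samples[0][0]
    return all(restarts == baseline and ready for restarts, ready in samples)


def watch(pod: str, namespace: str = "default", checks: int = 6, interval: float = 10.0) -> bool:
    samples = []
    for i in range(checks):
        samples.append(snapshot(pod, namespace))
        if i < checks - 1:
            time.sleep(interval)
    return is_stable(samples)


if __name__ == "__main__":
    print("stable" if watch(sys.argv[1]) else "still unstable: check the --previous logs again")
```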

For anything critical, add a PodDisruptionBudget so Kubernetes doesn’t evict the pod during node maintenance at the worst possible moment:

```yaml
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: my-app-pdb
spec:
  minAvailable: 1
  selector:
    matchLabels:
      app: my-app
```

One last thing. After the pod stabilizes, check your resource requests against actual usage with kubectl top pods (this needs metrics-server running in the cluster). If the crash was memory-related, raising the limit without fixing the underlying leak just delays the next incident, which could be hours or days away. Fix the root cause first. Then review your Horizontal Pod Autoscaler settings and scale deliberately. Cascading restarts near node capacity are their own category of miserable, and they’re almost always avoidable.
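To compare actual usage against the limit without eyeballing units, a small parser for `kubectl top pods` output helps. A sketch, assuming the default NAME / CPU(cores) / MEMORY(bytes) columns with integer Ki/Mi/Gi values. The 1Gi limit in the usage code is a hypothetical value (the starting point suggested in the OOMKilled section), so substitute your own.

```python
import subprocess

BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def memory_bytes(value: str) -> int:
    """Convert values like '210Mi' to bytes (integer Ki/Mi/Gi only)."""
    for suffix, factor in BINARY.items():
        if value.endswith(suffix):
            return int(value[: -len(suffix)]) * factor
    return int(value)


def parse_top(output: str) -> dict[str, int]:
    """Map pod name to memory bytes from `kubectl top pods` output."""
    usage = {}
    for line in output.strip().splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) >= 3:
            usage[fields[0]] = memory_bytes(fields[2])
    return usage


def usage_vs_limit(usage: dict[str, int], limit: str) -> dict[str, float]:
    """Fraction of the memory limit each pod is currently using."""
    limit_bytes = memory_bytes(limit)
    return {pod: used / limit_bytes for pod, used in usage.items()}


if __name__ == "__main__":
    out = subprocess.run(
        ["kubectl", "top", "pods", "-n", "default"],
        check=True, capture_output=True, text=True,
    ).stdout
    for pod, fraction in usage_vs_limit(parse_top(out), "1Gi").items():
        print(f"{pod}: {fraction:.0%} of limit")
```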
