What NotReady Actually Means

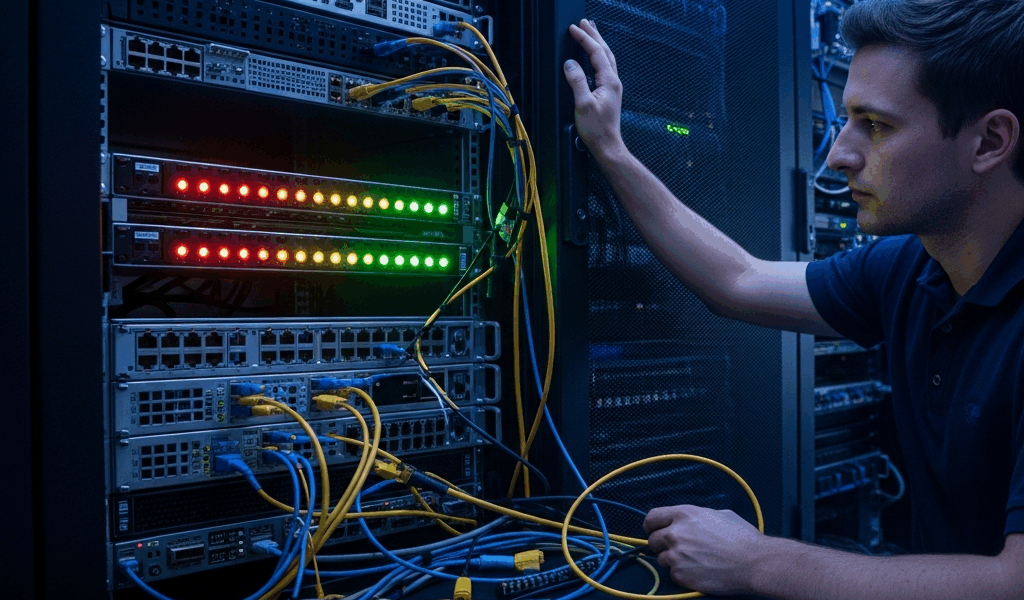

Kubernetes node troubleshooting stopped being simple a long time ago, with all the conflicting advice flying around. I have debugged NotReady nodes at 2am more times than I’d like to admit, and along the way I learned what usually causes this and what fixes it. Today, I will share it with you.

But what is a NotReady node? In essence, it’s a node that has stopped reporting its health to the control plane or is failing internal health checks. But it’s much more than that. When you run kubectl get nodes, you’ll see NotReady in the STATUS column instead of Ready. The node itself is usually still running — the kubelet just isn’t communicating properly with the API server, or the node has detected a resource problem it can’t resolve on its own.
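As a rough sketch of that first check, the helper below picks out every node whose STATUS column is not Ready. The function name `not_ready_nodes` is made up for illustration, and it reads the `kubectl get nodes` table on stdin so it can be tried without a cluster.

```shell
# Print the names of nodes whose STATUS is not Ready.
# Input: the table printed by `kubectl get nodes` (NAME in column 1, STATUS in column 2).
# "Ready,SchedulingDisabled" still counts as Ready; "NotReady,..." does not.
not_ready_nodes() {
  awk 'NR > 1 && $2 !~ /^Ready(,|$)/ { print $1 }'
}

# Typical use against a live cluster:
#   kubectl get nodes | not_ready_nodes
```

Filtering on the STATUS text keeps the helper dependency-free; on a real cluster, a JSON or jsonpath query would be a sturdier choice.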

Think of it as a signal that something broke recently. The network plugin crashed. The disk filled up. A certificate expired while the node was offline. Whatever happened, new pods aren’t scheduling there. Existing pods stay bound to the node until their not-ready toleration runs out (300 seconds by default), then get marked for eviction, and their replacements sit in Pending if nothing else has room.

That’s what makes diagnosing NotReady so frustrating to us cluster operators — the symptom is clear, the cause is almost never obvious.

Start Here — Check Kubelet Status First

Start with the part that actually matters. SSH into the affected node immediately. The kubelet is the agent on each node that talks to the control plane. If it’s dead or stuck, the node will be NotReady. Full stop.

Run this command:

systemctl status kubelet

If kubelet shows active (running) in green, move on. If it shows inactive (dead) or failed, pull the logs right now:

journalctl -u kubelet --no-pager -n 50

That gives you the last 50 lines. Read them bottom to top. You’re hunting for patterns.

Three Common Kubelet Failure Patterns

Pattern 1: CNI plugin not initialized. You’ll see something like “Failed to initialize CNI: error reading the config file” or “no networks found in /etc/cni/net.d.” The network plugin — Calico, Flannel, Weave, take your pick — hasn’t set up networking on this node yet. The kubelet refuses to proceed until it can. Hard stop.

Pattern 2: Certificate errors. Look for “x509: certificate has expired” or “unable to load in-cluster configuration.” Almost always a kubelet client certificate that expired while you weren’t looking. There’s a dedicated section below covering the fix.

Pattern 3: Systemd resource limits. Less common — but brutal when it hits. You’ll see “Failed to move pod sandbox to systemd cgroup” or “systemd resource controller not available.” I’m apparently the type who skips reading systemd unit files carefully, and that approach never works for me on GKE nodes. Spent two hours chasing this exact issue before noticing the unit had MemoryLimit=512M baked right into it. Don’t make my mistake. Check your cgroup v2 config and kubelet memory limits before assuming something exotic is wrong.
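To make the pattern hunt less error-prone at 2am, here is a minimal sketch that scans kubelet log text for the three message families described above. The function name `classify_kubelet_log` and its output labels are hypothetical, and it matches only the strings quoted in this article, so treat "unknown" as "keep reading the logs", not as a clean bill of health.

```shell
# Read kubelet log lines on stdin and print which known failure pattern(s) appear.
classify_kubelet_log() {
  awk '
    /Failed to initialize CNI|no networks found in/                                   { cni = 1 }
    /x509: certificate has expired|unable to load in-cluster configuration/           { cert = 1 }
    /Failed to move pod sandbox to systemd cgroup|systemd resource controller not available/ { cgroup = 1 }
    END {
      if (cni)    print "cni"
      if (cert)   print "certificate"
      if (cgroup) print "systemd-cgroup"
      if (!cni && !cert && !cgroup) print "unknown"
    }'
}

# Typical use on the affected node:
#   journalctl -u kubelet --no-pager -n 50 | classify_kubelet_log
```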

If kubelet shows active (running) but errors are still appearing in the logs, the process is alive and struggling — which is actually progress. You can safely restart it.

Healthy logs look like this: kubelet version printed, node registration succeeding, periodic status updates going to the API server. No certificate errors, no CNI complaints. If everything looks clean, restart anyway as a sanity check:

systemctl restart kubelet

Wait 30 seconds. Then check node status from your control plane:

kubectl get nodes

Node moved to Ready? Done. Still NotReady? Keep going.

Diagnose Network Plugin and CNI Failures

The Container Network Interface plugin handles IP assignment for pods and sets up the networking overlay. No CNI, no node initialization. The node stays NotReady until that gets resolved.
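A quick node-side sanity check is to count the CNI config files the plugin should have written. This is a sketch that assumes the default config directory /etc/cni/net.d and looks only for .conf and .conflist files; the helper name `cni_config_count` is invented for this example.

```shell
# Print how many CNI config files exist in a directory (default: /etc/cni/net.d).
# A result of 0 suggests the network plugin never finished installing on this node.
cni_config_count() {
  dir=${1:-/etc/cni/net.d}
  # find prints one line per matching regular file; wc -l counts them.
  find "$dir" -maxdepth 1 -type f \( -name '*.conf' -o -name '*.conflist' \) 2>/dev/null \
    | wc -l | tr -d ' '
}

# Typical use on the affected node:
#   [ "$(cni_config_count)" -gt 0 ] || echo "no CNI config found"
```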

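Check the CNI pods in the kube-system namespace. Calico uses calico-node, Flannel uses kube-flannel, and so on. Pod names rarely contain the string "cni", so grep for the plugin name instead:

kubectl get pods -n kube-system | grep -Ei 'calico|flannel|weave'

Some installs put these pods in their own namespace (calico-system or kube-flannel, for example), so swap -n kube-system for -A if nothing shows up. Or go broader: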

kubectl get pods -n kube-system -o wide

Find the CNI pod running on your NotReady node. If it’s sitting in CrashLoopBackOff, ImagePullBackOff, or Pending, the CNI itself is broken. Pull its logs:

kubectl logs -n kube-system <cni-pod-name> --tail=30

Common errors: “config file not found in /etc/cni/net.d” or permission failures. On the affected node directly, verify the CNI config directory has actual content:

ls -la /etc/cni/net.d/

You need at least one .conf or .conflist file sitting there. An empty directory means an incomplete installation. Reinstall the plugin. For Calico:

kubectl apply -f https://docs.projectcalico.org/manifests/tigera-operator.yaml

For Flannel:

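kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Manifest locations for both projects have moved between releases, so confirm the URL against the project’s current install docs before applying. Give it 60 seconds for pods to spin up, then recheck node status. A kubelet restart on the affected node sometimes nudges things along too: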

systemctl restart kubelet

Check for Disk Pressure, Memory Pressure, and PID Pressure

From your control plane, run:

kubectl describe node <node-name>

Scroll to the Conditions section. You want to see this:

Conditions:
  Type             Status  LastHeartbeatTime
  ----             ------  -----------------
  MemoryPressure   False
  DiskPressure     False
  PIDPressure      False
  Ready            True

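Any of those pressure conditions showing True means the node is reporting resource exhaustion. Kubernetes taints the node to stop new pods from scheduling there, and the kubelet starts evicting pods to reclaim resources. If the exhaustion is bad enough to choke the kubelet or the container runtime, the node tips over into NotReady. It’s doing you a favor, even if it doesn’t feel that way at 11pm.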
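If scrolling through describe output gets tedious, the pressure check can be reduced to a one-line filter. This is only a sketch: `active_pressure` is a made-up helper name, and it expects lines whose first two fields are the condition type and status, which holds for both the Conditions table above and the jsonpath query shown in the comment.

```shell
# Print every *Pressure condition whose status is True.
# Input: lines starting with "<Type> <Status>", e.g. "DiskPressure True".
active_pressure() {
  awk '$1 ~ /Pressure$/ && $2 == "True" { print $1 }'
}

# Typical use (replace <node-name> with the affected node):
#   kubectl get node <node-name> \
#     -o jsonpath='{range .status.conditions[*]}{.type}{" "}{.status}{"\n"}{end}' \
#     | active_pressure
```

Empty output means no pressure condition is active, which points back at the kubelet, CNI, or certificates.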

SSH into the node and check disk usage directly:

df -h

Root filesystem or /var above 85%? That’s your culprit. Container logs pile up under /var/lib/docker/containers on Docker or /var/log/pods on containerd, and images fill /var/lib/docker or /var/lib/containerd. Clean house:

docker system prune -a --volumes -f

That also deletes unused volumes, so make sure nothing on the node still needs them. Or for containerd:

crictl rmi --prune

Then check memory:

free -m

Memory pressure is less common on nodes than disk, but worth a look. For PID pressure, check the system limit:

cat /proc/sys/kernel/pid_max

Then compare against current PID count:

ps -eLf | wc -l

The -L flag lists threads as well as processes, which matters because every thread consumes a PID. Hitting PID limits repeatedly points to a process leak. Zombie process accumulation, usually. That means investigating which deployment is spawning processes without cleaning them up, a different problem worth its own debugging session.
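The disk and PID checks can be wrapped into two small helpers. This is a sketch under a few assumptions: the names `mounts_over` and `pid_usage_percent` are invented here, the 85% default mirrors the rule of thumb above, not a kubelet setting, and the disk helper expects the POSIX layout printed by `df -P`.

```shell
# Print mount points whose usage is above a threshold (default 85).
# Input: `df -P` output, where column 5 is capacity (e.g. "91%") and column 6 the mount point.
mounts_over() {
  awk -v limit="${1:-85}" '
    NR > 1 {
      use = $5
      sub(/%/, "", use)
      if (use + 0 > limit + 0) print $6, use "%"
    }'
}

# Print the percentage of the PID limit in use (integer division).
# $1: current task count, $2: limit from /proc/sys/kernel/pid_max.
pid_usage_percent() {
  echo $(( $1 * 100 / $2 ))
}

# Typical use on the affected node:
#   df -P | mounts_over 85
#   pid_usage_percent "$(ps -eL | wc -l)" "$(cat /proc/sys/kernel/pid_max)"
```

The thread count from `ps -eL` includes one header line, which is close enough for a percentage.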

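Once you’ve freed space or memory, the kubelet notices within the next status update cycle, which defaults to 10 seconds. A pressure condition can take a few minutes longer to clear, because the kubelet holds it for a transition period (5 minutes by default) to avoid flapping. The node should then transition to Ready on its own. If it doesn’t: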

systemctl restart kubelet

Fix Expired Node Certificates

Frustrated by a node that looks perfectly healthy but still won’t rejoin the cluster, many engineers eventually discover the real culprit: an expired certificate hiding in plain sight. A node goes offline for maintenance. While it’s down, its kubelet client certificate passes its expiry date, and because the kubelet wasn’t running, the automatic rotation that normally renews it never happened. The node comes back up. Its kubelet client certificate is now expired. It can’t authenticate to the API server. NotReady.
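To confirm this on the node itself, you can read the expiry date straight off the kubelet’s client certificate. The sketch below assumes a kubeadm-style path (/var/lib/kubelet/pki/kubelet-client-current.pem) and GNU date for parsing; `cert_expired` is a hypothetical helper that takes the `notAfter=` line printed by openssl.

```shell
# Exit 0 if the certificate end date is in the past, 1 if still valid, 2 if unparseable.
# $1: a line like "notAfter=Jan  1 00:00:00 2030 GMT" (from `openssl x509 -noout -enddate`)
# $2: current time in epoch seconds (optional, defaults to now)
cert_expired() {
  end=${1#notAfter=}
  now=${2:-$(date +%s)}
  end_epoch=$(date -d "$end" +%s) || return 2   # GNU date assumed
  [ "$end_epoch" -lt "$now" ]
}

# Typical use on the affected node (path is an assumption, adjust for your distribution):
#   line=$(openssl x509 -noout -enddate -in /var/lib/kubelet/pki/kubelet-client-current.pem)
#   cert_expired "$line" && echo "kubelet client certificate has expired"
```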

From your control plane, check expiry dates:

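kubeadm certs check-expiration

That lists the control-plane certificates kubeadm manages (apiserver, apiserver-kubelet-client, and so on). A worker’s own kubelet certificates live on the worker under /var/lib/kubelet/pki and do not appear in this list. Expiry date in the past means it’s expired. Renew everything: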

kubeadm certs renew all

That regenerates certificates on the control plane. Restart the control-plane components afterwards so they pick up the new files. The affected node still has its old certificate cached locally, which is why you also need to restart kubelet on that node specifically:

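ssh <node-name>

systemctl restart kubelet

The kubelet will request a fresh certificate from the control plane during startup. If its client certificate has expired outright, a restart may not be enough and the node may need to be re-bootstrapped with a new bootstrap token. Give it 15 to 30 seconds. Then verify: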

kubectl get nodes

Before doing any of this in production, drain the node first. Migrate its pods safely:

kubectl drain <node-name> --ignore-daemonsets --delete-emptydir-data

After the kubelet restarts and the node rejoins, uncordon it:

kubectl uncordon <node-name>

This is the safest path under pressure, at least if you want to avoid disrupting running workloads while you’re mid-fix. It gives you breathing room to confirm the certificate issue is actually resolved before resuming scheduling.
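The drain, restart, uncordon sequence can be sketched as a small script with a dry-run switch, so you can read the exact commands before anything touches the cluster. Several things here are assumptions, not part of the procedure above: the helper names `run` and `recover_node`, the `DRY_RUN` variable, that the node name doubles as its SSH host, that sudo is available there, and the 120-second wait.

```shell
# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Drain the node, restart its kubelet over SSH, wait for Ready, then uncordon.
# $1: node name (assumed to also be reachable as an SSH host).
recover_node() {
  node=$1
  run kubectl drain "$node" --ignore-daemonsets --delete-emptydir-data || return 1
  run ssh "$node" sudo systemctl restart kubelet || return 1
  # Only resume scheduling once the node actually reports Ready.
  run kubectl wait --for=condition=Ready "node/$node" --timeout=120s || return 1
  run kubectl uncordon "$node"
}

# Preview the commands first, then run for real:
#   DRY_RUN=1 recover_node <node-name>
#   recover_node <node-name>
```

Stopping at the first failed step leaves the node cordoned, which is the safer state to be stuck in.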
