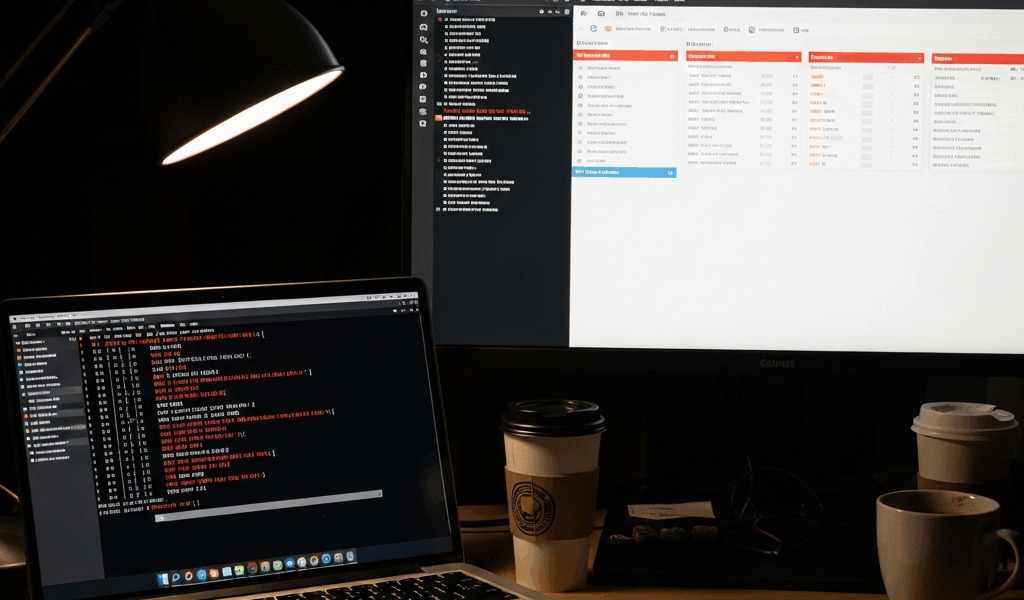

Docker Container Won’t Start — Debugging This Mess Step by Step

Docker troubleshooting has become exhausting, with all the conflicting advice flying around. Restart the daemon. Nuke the image. Rebuild from scratch. Meanwhile, your deployment pipeline is dead and someone’s about to get a very unpleasant Slack message.

As someone who has broken production containers at truly unreasonable hours, I learned everything there is to know about container startup failures — mostly by making every mistake personally. Today, I will share it all with you.

The short version: most failures follow maybe four or five predictable patterns. You don’t need deep kernel knowledge or a $300 observability platform. You need a systematic approach and the discipline to check the obvious thing first.


Check the Logs First — docker logs Container_ID

Real talk: this is the important bit. This single command surfaces the cause of most container startup issues, yet I still watch engineers go straight to Docker daemon logs, kernel messages, and cgroup resource monitors before ever running the obvious thing.

When a container fails to start, your first command should be this:

docker logs <container_id_or_name>

Container already exited? The logs stay available until the container is removed. Add --tail to pull only the last N lines:

docker logs --tail 50 <container_id>

Container is technically running but acting strange? Follow it live:

docker logs -f <container_id>

What to look for in the output

Once you have the logs open, scan for these patterns. I learned this the hard way — three hours debugging a “crashed” container that was missing exactly one environment variable. Three hours. Don’t make my mistake.

- FileNotFoundError or No such file or directory — Configuration file missing. Entrypoint script not mounted. Dependency path wrong.

- Connection refused — Service trying to connect to localhost. Database not accessible. Wrong hostname.

- Permission denied — Running as wrong user. File permissions on mounted volumes. Container can’t read or write.

- Module not found or package not found — Missing dependency in image. Wrong Python path. npm packages not installed.

- Address already in use — Port conflict with another container. Previous container didn’t fully shut down.

Checking logs first eliminates roughly 70% of false starts. That’s not a small number when it’s 2 AM and the on-call rotation is one phone call away.
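If you find yourself scanning for the same strings every time, the list above can be sketched as a small filter. This is only a heuristic under my own assumptions: the function name classify_logs and the hint wording are made up for illustration, and the extra patterns "No module named" and "Cannot find module" are the Python and Node phrasings of a missing module.

```shell
# classify_logs: read container logs on stdin and print one hint per
# matching pattern. A rough heuristic, not a real log parser.
classify_logs() {
    logs=$(cat)
    match() {
        # $1 = extended regex (matched case-insensitively), $2 = hint to print
        if printf '%s\n' "$logs" | grep -Eiq "$1"; then
            printf '%s\n' "$2"
        fi
    }
    match 'FileNotFoundError|No such file or directory' 'missing file: check mounts and paths'
    match 'Connection refused' 'connection refused: check hostnames and service availability'
    match 'Permission denied' 'permission denied: check user and volume ownership'
    match 'Module ?not ?found|No module named|Cannot find module|package not found' 'missing dependency: check the image build'
    match 'Address already in use' 'port conflict: check other containers'
}
```

Typical use would be docker logs --tail 200 <container_id> 2>&1 | classify_logs. The 2>&1 matters because docker logs replays the container's stderr on stderr.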

Exit Code 1 — Application Error

Exit code 1 is about as generic as it gets. Your application crashed. The logs will tell you the actual reason — but here are the culprits I keep seeing in production, over and over again.

Missing environment variables

Your application expects DATABASE_URL, API_KEY, or LOG_LEVEL to exist. It doesn’t. The container initializes, hits a null reference somewhere in the startup sequence, and dies immediately — often without a particularly helpful error message.

Check what variables are actually present inside the running container:

docker exec <container_id> env

That only works while the container is running. For one that has already exited, read the configured variables with docker inspect --format '{{json .Config.Env}}' <container_id> instead.

Compare that output to what your application actually needs. Three ways to fix it: pass variables at runtime, bake them into the image, or mount them from a config file. Most production setups land on environment variable files or Docker secrets:

docker run --env-file .env.production <image>

In Kubernetes, ConfigMaps and Secrets handle this. In Docker Compose, use the environment section in your service definition.
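Comparing an env dump against a list of required names by eye is exactly how I lost those three hours, so here is a minimal sketch of doing it mechanically. The function name missing_vars is hypothetical, and the required names are the example ones from this section.

```shell
# missing_vars NAME...: read `env`-style output (NAME=value lines) on stdin
# and print every required name that is absent or set to an empty value.
missing_vars() {
    env_dump=$(cat)
    for name in "$@"; do
        # A variable counts as present only if something follows the "=".
        if ! printf '%s\n' "$env_dump" | grep -q "^${name}=."; then
            printf '%s\n' "$name"
        fi
    done
}
```

With a running container this could be fed as docker exec <container_id> env | missing_vars DATABASE_URL API_KEY LOG_LEVEL. Treating an empty value as missing is a design choice of this sketch; some applications accept empty strings.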

Configuration files not mounted

Your app reads from /etc/myapp/config.yaml. That file lives on the host. You forgot to mount it. The application can’t find it and crashes — usually with a FileNotFoundError that’s pretty straightforward once you actually read the logs.

Using Docker directly, use the -v flag:

docker run -v /host/path/config.yaml:/container/path/config.yaml <image>

In Docker Compose:

volumes:
  - ./config.yaml:/etc/myapp/config.yaml

In Kubernetes, mount a ConfigMap or Secret as a volume.

Permission issues

The container runs as a non-root user — good security practice — but that user can’t read mounted files or write to certain directories. Check the Dockerfile to see what user the image specifies. Then verify file ownership on the host actually matches that user’s UID.

For a quick diagnostic, run the container as root temporarily:

docker run --user root <image>

If it works as root but not otherwise, you’ve confirmed the permissions problem. Fix it properly: change file ownership on the host to match the container user’s UID, or chown the relevant directories in your Dockerfile during the build.
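To check the ownership side without guessing, something like the following could compare a host file's owner against the UID the container runs as. The helper names owner_uid and uid_matches are my own, and the sketch only looks at ownership, not at mode bits or group access.

```shell
# owner_uid FILE: print the numeric UID that owns FILE.
# Tries the GNU/BusyBox form of stat first, then the BSD/macOS form.
owner_uid() {
    stat -c '%u' "$1" 2>/dev/null || stat -f '%u' "$1"
}

# uid_matches FILE UID: succeed if FILE is owned by UID.
uid_matches() {
    [ "$(owner_uid "$1")" = "$2" ]
}
```

The container side of the comparison can come from docker run --rm --entrypoint id <image> -u, which prints the UID of the image's default user. Then uid_matches ./config.yaml 1000 || echo "owner mismatch" (with 1000 standing in for whatever UID you got) tells you whether the host file lines up.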

Missing runtime dependencies

I’m apparently the person who always forgets system libraries. Interpreted languages are especially prone to this, because nothing looks for the library until the import runs at startup. You have Python installed. Flask is there. But the image doesn’t include libpq, so the PostgreSQL driver refuses to load. Or a compiled binary needs a shared library that simply doesn’t exist in the image.

The logs show this clearly. Find what the application is looking for, then install it in a RUN instruction in the Dockerfile.
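When the log message is vague about which library is missing, ldd can list a binary's shared-library dependencies. The sketch below just filters that output. It assumes ldd exists in the image (not a given on minimal images), and missing_libs is a name I made up.

```shell
# missing_libs: read `ldd` output on stdin and print the names of shared
# libraries that the dynamic linker reported as "not found".
missing_libs() {
    awk '/=> not found/ { print $1 }'
}
```

A possible invocation is docker run --rm --entrypoint ldd <image> /path/to/binary | missing_libs, where /path/to/binary is a placeholder for your executable or a compiled extension module.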

Exit Code 137 — Out of Memory

Exit code 137 means the process was killed with SIGKILL (128 + 9), and the usual suspect is the OOM killer. Memory climbed gradually, maybe over hours, and the system decided your container had to go. These failures are annoying because the container appears healthy right up until it doesn’t.

First, confirm the actual cause. Check Docker’s event log:

docker events --since 1h --filter event=oom

That replays the last hour of OOM events and keeps streaming until you press Ctrl+C. Or inspect the container directly:

docker inspect <container_id> | grep -i oom

You’re looking for "OOMKilled": true.
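The arithmetic behind 137 is worth spelling out: by shell convention, codes above 128 mean "killed by signal (code minus 128)", and signal 9 is SIGKILL. Here is a small lookup sketch. The function name and the wording of each message are mine, and codes 126 and 143 are included only as the other conventional values you are likely to meet.

```shell
# explain_exit_code CODE: print a short interpretation of a container exit code.
explain_exit_code() {
    code=$1
    case "$code" in
        0)   echo "exited cleanly" ;;
        1)   echo "application error: read the logs" ;;
        126) echo "command found but not executable" ;;
        127) echo "command not found" ;;
        137) echo "SIGKILL (128 + 9): OOM kill, docker kill, or stop timeout" ;;
        143) echo "SIGTERM (128 + 15): asked to stop" ;;
        *)
            if [ "$code" -gt 128 ]; then
                # Conventional encoding: 128 + signal number.
                echo "killed by signal $((code - 128))"
            else
                echo "application-defined exit code"
            fi
            ;;
    esac
}
```

The code itself can be read with docker inspect --format '{{.State.ExitCode}} {{.State.OOMKilled}}' <container_id>. A 137 with OOMKilled false points at something other than memory, such as a manual kill.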

How to check memory limits

See what limit is currently configured:

docker inspect --format '{{.HostConfig.Memory}} {{.HostConfig.MemoryReservation}}' <container_id>

Both values are in bytes, and 0 means no limit is set. Something like 536870912 (512 MiB) for a Java service is almost certainly your problem.
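docker inspect reports the hard limit in bytes under HostConfig.Memory, with 0 meaning unlimited, which is not pleasant to read at 2 AM. A tiny converter, assuming that field as input (describe_limit is a hypothetical name):

```shell
# describe_limit BYTES: turn a HostConfig.Memory value into readable text.
# 0 is Docker's way of saying that no hard limit is configured.
describe_limit() {
    if [ "$1" -eq 0 ]; then
        echo "no limit"
    else
        echo "$(( $1 / 1024 / 1024 )) MiB"
    fi
}
```

For example, describe_limit "$(docker inspect --format '{{.HostConfig.Memory}}' <container_id>)" would print the configured ceiling in MiB, rounded down.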

Watch actual usage in real-time:

docker stats <container_id>

That shows current memory consumption, percentage of the configured limit, and whether you’re approaching the ceiling.

Increasing container memory

Set a higher limit when starting the container:

docker run -m 2g <image>

That caps the container at 2 gigabytes. You can separate soft and hard limits too:

docker run -m 4g --memory-reservation 2g <image>

In Docker Compose:

services:
  myapp:
    image: myapp:latest
    deploy:
      resources:
        limits:
          memory: 4G
        reservations:
          memory: 2G

But increasing the limit is a Band-Aid, at least if you haven’t identified why memory is climbing. The real fix is tracking down the leak in application code. Memory leaks compound. They will eventually exhaust even generous limits.
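Before hunting for a leak, it helps to know whether usage really only goes up. A crude sketch: collect a handful of samples over time and check that none of them is lower than the one before. The name is_climbing and the sampling interval are my own choices, and a garbage-collected runtime with a sawtooth pattern will not trip this check even if it does leak.

```shell
# is_climbing: read numeric samples (one per line, oldest first) on stdin.
# Succeeds only if no sample is lower than its predecessor and the last
# sample is higher than the first.
is_climbing() {
    awk '
        { v = $1 + 0 }
        NR == 1 { first = v; prev = v; next }
        v < prev { dropped = 1 }
        { prev = v }
        END { exit !(NR > 1 && !dropped && prev > first) }
    '
}

# Possible sampling loop (six samples, ten minutes apart):
#   for i in 1 2 3 4 5 6; do
#       docker stats --no-stream --format '{{.MemPerc}}' <container_id> | tr -d '%'
#       sleep 600
#   done | is_climbing && echo "memory only ever went up"
```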

Exit Code 127 — Command Not Found

The container tries to execute something that doesn’t exist. The entrypoint script, a binary, a shell command. Exit code 127 is unmistakable. The system literally cannot find what you told it to run.

Wrong entrypoint configuration

Your Dockerfile specifies an entrypoint that isn’t present in the final image. Frustrated by an inexplicably failing multi-stage Go build one afternoon, I eventually discovered I’d been copying the entrypoint script into the wrong stage entirely — using a perfectly valid COPY instruction pointing at the wrong source.

Verify what’s actually inside the image:

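docker run --rm --entrypoint ls <image> -la /app

The --entrypoint override matters here: without it, ls -la /app would be passed as arguments to the very entrypoint you suspect is broken. Check the Dockerfile’s ENTRYPOINT and CMD directives. Confirm the file path is correct and the file is there.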
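"The file is there" has two parts: it exists, and it is executable. A minimal sketch of that check, meant to be run inside the image (check_exec is a made-up name, and /app/entrypoint.sh in the note below is a placeholder path):

```shell
# check_exec PATH: report whether PATH could be run as an entrypoint.
check_exec() {
    if [ ! -e "$1" ]; then
        echo "missing: $1"
    elif [ ! -x "$1" ]; then
        echo "not executable: $1"
    else
        echo "ok: $1"
    fi
}
```

The same test works as a one-liner against an image: docker run --rm --entrypoint sh <image> -c '[ -x /app/entrypoint.sh ] && echo ok || echo broken'.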

Binary not in PATH

Your application references something like redis-cli or curl. It’s not installed, or it’s not on the system PATH.

docker run --rm --entrypoint which <image> curl

No output means it’s not available. Add it to the Dockerfile via your package manager.
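If the application shells out to several tools, checking them one at a time gets old. This sketch checks a whole list with command -v, which is built into the shell and so works even where which is not installed. The function name missing_cmds is hypothetical.

```shell
# missing_cmds NAME...: print each command that cannot be found on PATH.
missing_cmds() {
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}
```

Inside an image, the equivalent one-liner would be docker run --rm --entrypoint sh <image> -c 'for c in curl redis-cli; do command -v "$c" >/dev/null || echo "missing: $c"; done'.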

Multi-stage build issues

What counts as a multi-stage build problem? You copy artifacts between build stages but accidentally skip something critical: an entrypoint script, a shared library, a necessary binary. More broadly, it’s the whole category of “the image built successfully but is still broken.”

Double-check every COPY instruction. Confirm the correct source stage. Confirm the destination path.
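In a long Dockerfile, "every COPY instruction" is easier to audit when the cross-stage ones are pulled out with their line numbers. A small sketch, assuming stages are referenced with the usual COPY --from= form (list_stage_copies is my own name):

```shell
# list_stage_copies DOCKERFILE: print every COPY that pulls from another
# stage, prefixed with its line number, so each source stage and path can
# be checked by eye.
list_stage_copies() {
    grep -n -i -E '^[[:space:]]*COPY[[:space:]].*--from=' "$1"
}
```

Running list_stage_copies Dockerfile gives one line per cross-stage copy. It finds the instructions; deciding whether the stage name and paths are right is still on you.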

Container Starts and Immediately Exits

The container runs. Then it stops. No error in the logs. This is usually a process management problem — not an application crash at all.

No foreground process running

Docker containers need a foreground process. That process exits, the container stops. That’s the design — the container lifecycle is tied directly to your main application process.

Daemons like Nginx and Apache fork to the background by default. The main process exits immediately. The container sees that and shuts down.

Fix it by running the daemon in foreground mode. For Nginx: nginx -g "daemon off;". For Apache: apache2ctl -D FOREGROUND. Check your application’s documentation for the equivalent flag.
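The same rule applies to wrapper scripts. If an entrypoint script does some setup and then launches the application in the background, the script ends and the container goes with it. One common pattern, sketched here with a hypothetical function name, is to finish the script with exec so the application replaces the shell and becomes the container's main process.

```shell
# run_as_main CMD [ARGS...]: do any setup, then replace the current shell
# with the application. When this is the last step of an entrypoint script,
# the application becomes the container's foreground process and receives
# signals such as SIGTERM directly.
run_as_main() {
    # (hypothetical setup steps, such as rendering a config file, go here)
    exec "$@"
}
```

At the end of an entrypoint script this might read run_as_main nginx -g 'daemon off;'. Nothing after that line runs, because exec never returns on success.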

CMD vs ENTRYPOINT confusion

Both CMD and ENTRYPOINT define what runs at container startup — but they behave differently. ENTRYPOINT sets the main executable. CMD provides default arguments, or acts as the main command when no ENTRYPOINT is specified.

When both are present, ENTRYPOINT runs and CMD gets passed as arguments. Only CMD present? It runs directly.

Structure it like this in your Dockerfile:

ENTRYPOINT ["python", "app.py"]
CMD ["--port", "8000"]

That makes it easy to override arguments at runtime without touching the entrypoint itself.
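To make the interaction concrete, here is a toy model of how the final command line is assembled for exec-form instructions: ENTRYPOINT comes first, followed by CMD, unless arguments are given after the image name on docker run, in which case they replace CMD. The function effective_command is purely illustrative. It ignores shell-form instructions and the --entrypoint flag.

```shell
# effective_command ENTRYPOINT CMD [RUN_ARGS]: print the command line a
# container would run under the exec-form rules.
effective_command() {
    entrypoint=$1
    cmd=$2
    run_args=${3:-}
    # Arguments passed to `docker run` after the image name replace CMD.
    if [ -n "$run_args" ]; then
        cmd=$run_args
    fi
    # Join the parts with a space and trim any trailing blank.
    printf '%s\n' "${entrypoint:+$entrypoint }$cmd" | sed 's/ *$//'
}
```

With the Dockerfile above, effective_command "python app.py" "--port 8000" prints python app.py --port 8000, and adding "--port 9000" as a third argument prints python app.py --port 9000, which is what docker run <image> --port 9000 would execute.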

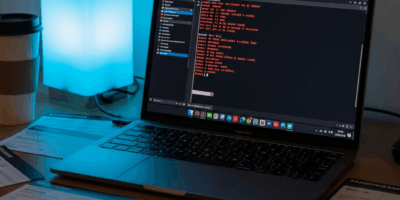

The tail -f /dev/null debugging trick

Application exits immediately and you need to poke around inside the container? Override the entrypoint temporarily:

docker run -d --entrypoint tail <image> -f /dev/null

The -d flag runs it in the background and prints the container ID. The container stays alive. Now exec in and test things by hand:

docker exec -it <container_id> /bin/bash

If the image has no bash (Alpine-based images usually don’t), use /bin/sh. Run your application’s startup command manually. Watch exactly where it fails. That’s what makes this trick so useful: it surfaces edge cases that never show up in the logs.
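The whole trick can be wrapped into one helper that also cleans up after itself, which is the step I tend to forget. This is a sketch under my own assumptions: debug_container is a made-up name, it starts the container detached with -d, and it opens /bin/sh rather than bash because minimal images often lack bash.

```shell
# debug_container IMAGE: start IMAGE with its entrypoint replaced by
# `tail -f /dev/null`, open an interactive shell inside it, and remove the
# container once the shell exits.
debug_container() {
    cid=$(docker run -d --entrypoint tail "$1" -f /dev/null) || return 1
    docker exec -it "$cid" /bin/sh
    docker rm -f "$cid" >/dev/null
}
```

Calling debug_container myapp:latest drops you into a shell in a fresh container. Exit the shell and the container is removed.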

None of this is new or exotic. It is the same loop every time.

Check logs first. Match exit codes to their specific causes. Fix the root problem. Your future self at 2 AM will genuinely thank you.
