Cold Starts Explained in 30 Seconds

After three years elbow-deep in Lambda functions across production workloads, I know AWS cold start frustration well. You deploy a function. First invocation crawls in at 2 seconds. The next hundred zip by at 50 milliseconds. That gap is your cold start, and it’ll drive you absolutely insane if you let it.

So what is a cold start? It’s the initialization tax Lambda charges whenever it has to spin up a fresh container (AWS calls it an execution environment). When an invocation arrives and no warm container is free, Lambda provisions one, downloads your code, starts the runtime, runs your initialization code (everything outside the handler), and only then runs your function. All of that lands in that ugly initial spike. Subsequent invocations reuse the same warm container and skip those steps entirely.
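To make the init-versus-invoke split concrete, here is a minimal Python sketch of a handler that reports whether it paid the cold start. It relies only on the fact that module-level code runs once per container; the names `_is_cold` and `_INIT_STARTED` are illustrative, not part of any Lambda API.

```python
import time

# Module-level code runs once per execution environment, during initialization.
_INIT_STARTED = time.perf_counter()
_is_cold = True  # flipped to False after the first invocation in this environment


def handler(event, context):
    """Report whether this invocation was the first one in its container."""
    global _is_cold
    was_cold = _is_cold
    _is_cold = False
    return {
        "cold_start": was_cold,
        "seconds_since_init": round(time.perf_counter() - _INIT_STARTED, 3),
    }
```

Logging that flag is a cheap way to find out how often real traffic actually hits a cold container before you optimize anything.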

One thing worth saying out loud before I go further, because I made the mistake of optimizing for entirely the wrong scenario early on and it cost me a week of my life: not every cold start matters.

Cold starts genuinely hurt when they’re tied to synchronous operations. An API Gateway request sitting there waiting? Your user stares at a spinner for 2 seconds. Payment processing webhooks? That delay matters. Real-time chat? Yes. But an async event arriving via SQS that processes sometime in the next minute? Nobody cares. A background job running at 2 AM writing to a database that isn’t checking the clock? The cold start is invisible—which is exactly what I discovered after spending a week optimizing a scheduled Lambda that ran once daily. Saved 800 milliseconds. Zero humans noticed.

Timing varies wildly depending on runtime. Java cold starts land somewhere between 500–3000 milliseconds. Python and Node.js typically settle in around 100–800 milliseconds. Go is stupid fast, usually sub-100 milliseconds. Memory allocation plays into it too. Lambda allocates CPU in proportion to configured memory, so more memory means more CPU and faster initialization. That counterintuitive little quirk trips people up constantly.

Provisioned Concurrency — The Nuclear Option

Provisioned Concurrency is AWS’s blunt instrument for cold start elimination. You tell Lambda to keep, say, 10 warm containers running at all times, and those containers stay initialized and ready. Every invocation that lands on one of them skips the cold start. The one caveat: when concurrent requests exceed the provisioned count, the overflow runs on ordinary on-demand containers and cold-starts as usual.

The cost is straightforward and painful. Provisioned Concurrency is billed on configured memory and time; for a function with 1 GB of memory it runs roughly $0.015 per hour per unit (rates vary by region, so check current pricing). One unit equals one concurrent execution. Ten units running around the clock costs about $3.60 per day, or $108 per month. That stings when your function barely gets traffic.

That’s why this decision deserves real scrutiny. I’ve watched teams provision 50 units for a function getting three requests daily. At the same rate, that’s about $540 monthly for a problem that doesn’t exist. Don’t make their mistake.

When is it actually worth it? When your function serves real user traffic. When response time directly moves your conversion needle. When you’re handling API requests that need sub-500-millisecond responses. The mental math isn’t complicated: if avoiding 100 cold starts monthly saves your users 100 seconds of frustration, and Provisioned Concurrency runs $108, you’re paying about $1.08 per prevented cold start. Your product decides if that’s reasonable.
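The mental math above fits in a few lines of Python. This is only a sketch of the arithmetic: the $0.015 hourly rate is the rough figure used in this article and is an assumption, not a quoted price, and the function names are made up for illustration.

```python
HOURLY_RATE_PER_UNIT = 0.015  # USD; the article's rough figure, an assumption


def monthly_cost(units, hourly_rate=HOURLY_RATE_PER_UNIT, days=30):
    """Cost of keeping `units` environments provisioned around the clock."""
    return units * hourly_rate * 24 * days


def cost_per_prevented_cold_start(units, cold_starts_avoided, **kwargs):
    """Monthly spend divided by the cold starts it actually prevented."""
    return monthly_cost(units, **kwargs) / cold_starts_avoided


if __name__ == "__main__":
    print(f"10 units: ${monthly_cost(10):.2f} per month")
    print(f"per prevented cold start: ${cost_per_prevented_cold_start(10, 100):.2f}")
```

Plug in your own unit count and an honest estimate of cold starts avoided; the second number is the one to argue about with your product owner.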

Concurrency limits matter here too. Pair reserved concurrency with provisioned concurrency (set 10 provisioned, 100 reserved) and you get 10 permanently warm containers plus burst capacity up to 100 total concurrent executions. Beyond that ceiling, Lambda throttles: synchronous callers receive a 429 error, while asynchronous events are retried for a while before being discarded or sent to a dead-letter queue. Plan accordingly.
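As a rough sketch of that pairing, the helper below applies both settings through the boto3 Lambda client. The function name `configure_concurrency` and its parameters are hypothetical; the client is passed in (you would normally create it with `boto3.client("lambda")`), and the qualifier is assumed to be a published version or alias, because provisioned concurrency cannot be attached to $LATEST.

```python
def configure_concurrency(lambda_client, function_name, qualifier,
                          provisioned=10, reserved=100):
    """Apply a reserved ceiling and a provisioned floor to one function.

    lambda_client is expected to be boto3.client("lambda"); qualifier must be
    a published version or alias name.
    """
    if provisioned > reserved:
        raise ValueError("provisioned concurrency cannot exceed reserved concurrency")

    # Ceiling: total concurrent executions this function may use.
    lambda_client.put_function_concurrency(
        FunctionName=function_name,
        ReservedConcurrentExecutions=reserved,
    )
    # Floor: environments kept initialized for the given version or alias.
    lambda_client.put_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=qualifier,
        ProvisionedConcurrentExecutions=provisioned,
    )
```

Taking the client as an argument keeps the helper easy to exercise with a stub before pointing it at a real account.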

Code-Level Optimizations That Cut Cold Start by 50%

Before you spend a single dollar on Provisioned Concurrency, optimize your code. I typically see 40–60% cold start reduction from these practices alone, and they cost nothing but an afternoon.

Lazy Loading and Imports

Every import statement executing during initialization adds milliseconds—and Python developers feel this pain more than anyone. A 50-megabyte library imported at the top level means every cold start waits for that entire library to load, whether you need it or not.

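Move imports inside your handler function. Import only what that specific invocation actually uses. A database library? Inside the handler. A utility you call in 5% of invocations? Import it conditionally, right where you need it. Keep in mind that the import cost doesn’t vanish; it moves to the first invocation that needs the module, so the biggest wins come from dependencies most requests never touch.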

This single change took one of my handlers from 580 milliseconds down to 340 milliseconds on cold start—a Python-to-MongoDB handler where pymongo, the connection setup, and credential initialization all lived at module level. Moved them inside the function. Subsequent warm invocations saw zero performance change because Python had already cached the loaded module. Warm invocations don’t re-import. Cold starts do. That’s the whole game.
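Here is a minimal sketch of the conditional-import pattern. It uses the standard library’s `csv` module as a stand-in for a genuinely heavy dependency, and the event shape (`format`, `row`) is invented for the example.

```python
import json


def handler(event, context=None):
    """Import a rarely needed dependency only on the code path that uses it."""
    if event.get("format") == "csv":
        # Stand-in for a heavy library: the import cost is paid the first
        # time this branch runs, then Python serves it from sys.modules.
        import csv
        import io

        buffer = io.StringIO()
        csv.writer(buffer).writerow(event["row"])
        return {"body": buffer.getvalue().strip()}

    # Common path: never triggers the csv import.
    return {"body": json.dumps(event.get("row", []))}
```

The common path pays nothing for the rare one, and repeat calls to the rare path reuse the already loaded module.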

Smaller Deployment Packages

Lambda downloads your code on cold start. Smaller code means faster downloads—which sounds obvious until you actually audit your dependency tree and find a machine learning library you abandoned six months ago still sitting there, downloaded and initialized on every single cold start.

That’s exactly what happened on one handler I worked on. The dependency was listed but completely unused—just forgotten. Removing it cut the deployment package from 87 megabytes to 12 megabytes. Cold start dropped from 1,200 milliseconds to 400 milliseconds. Eight hundred milliseconds, gone, because nobody ran pip list in half a year.
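An audit like that can be partly automated. The sketch below walks a source tree with Python’s `ast` module and lists declared packages that nothing imports. It is a rough heuristic, not a complete tool: it assumes the package name matches the import name (which fails for cases like PyYAML, imported as `yaml`), and both function names are hypothetical.

```python
import ast
from pathlib import Path


def imported_top_level_modules(source_dir):
    """Collect top-level module names imported anywhere under source_dir."""
    found = set()
    for path in Path(source_dir).rglob("*.py"):
        tree = ast.parse(path.read_text(encoding="utf-8"))
        for node in ast.walk(tree):
            if isinstance(node, ast.Import):
                found.update(alias.name.split(".")[0] for alias in node.names)
            elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
                found.add(node.module.split(".")[0])
    return found


def unused_requirements(declared, source_dir):
    """Return declared packages that no file under source_dir imports.

    Treat the result as leads to check by hand, not a deletion list.
    """
    used = {name.lower() for name in imported_top_level_modules(source_dir)}
    return sorted(pkg for pkg in declared if pkg.lower().replace("-", "_") not in used)
```

Feed it the package names from your requirements file and the directory you deploy; anything it returns deserves a second look before the next release.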

Tree-shaking handles this systematically. If you import a 200-function utility library but only call three of them, tree-shaking strips out the other 197 during bundling. JavaScript bundlers like esbuild and Webpack do this automatically. Python has no true equivalent; the closest you get is auditing your declared dependencies and removing packages nothing imports. Java developers can reach for ProGuard or R8.

Lambda Layers help with packaging too, especially across teams. If ten functions all need the same logging library, create one Layer and reference it everywhere. You’re not uploading and versioning identical code ten times. Be aware that a layer’s contents still count toward the 250 MB unzipped limit and are still loaded on cold start, so Layers keep deployments tidy rather than shrinking the cold start by themselves.

Runtime-Specific Optimizations

Python: Create only the boto3 clients you actually use; if you only need S3, build just the S3 client. Use boto3.client instead of the resource interface, which initializes more slowly. The pandas import alone chews through 200+ milliseconds. Lazy-load it wherever possible, and your cold starts will thank you.
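Before moving imports around, it helps to measure what each one costs. The helper below is a small sketch using only the standard library; `time_import` is a made-up name, and the modules in the demo loop are arbitrary stand-ins for your real dependencies. Python’s built-in `-X importtime` flag gives a more detailed breakdown if you need one.

```python
import importlib
import sys
import time


def time_import(module_name):
    """Return seconds spent importing module_name in this process.

    Returns 0.0 if the module is already loaded, because a cached import is
    effectively free; that is also why warm invocations do not pay again.
    """
    if module_name in sys.modules:
        return 0.0
    started = time.perf_counter()
    importlib.import_module(module_name)
    return time.perf_counter() - started


if __name__ == "__main__":
    for name in ("decimal", "sqlite3", "email.parser"):
        print(f"{name}: {time_import(name) * 1000:.1f} ms")
```

Run it in a fresh interpreter with the same memory setting in mind; numbers from a laptop will be lower than what a small Lambda sees.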

Node.js: Bundle and minify with esbuild or Webpack. Pull console.log calls out of production code—they add overhead that accumulates. Keep your handler function thin, pushing heavy logic into imported modules that persist across warm invocations.

Java: This is where cold start times get genuinely ugly. GraalVM native image compilation helps substantially, though the build complexity is real. Standard Java Lambda cold starts run 1–3 seconds, and even with solid optimization, beating 500 milliseconds without special tooling is difficult. SnapStart, covered below, is the main exception.

Connection reuse deserves a specific callout. Create your database connections outside the handler function, at module level, or lazily on first use with the result cached in a module-level variable. That connection object persists across warm invocations. One connection created in a fresh container, reused on every warm invocation after. This doesn’t shrink the cold start itself, but database-heavy functions see meaningful latency reductions on every warm invocation from this pattern alone.

SnapStart for Java — The Game Changer

SnapStart launched as a Java feature (AWS has since extended it to Python and .NET runtimes) and honestly should be more widely talked about, because it genuinely transforms what’s possible with Java on Lambda.

So what is SnapStart? When you publish a function version, AWS initializes your Java function once and takes a memory snapshot before your handler ever runs. When a cold invocation arrives, Lambda restores from that snapshot instead of bootstrapping the JVM from scratch. Cold starts drop from 2,000+ milliseconds to somewhere between 200–400 milliseconds. Sometimes lower.

The snapshot captures your post-initialization memory state: JVM startup done, classes loaded, your initialization code complete. Subsequent cold invocations skip all of that and restore directly to the ready state. The implementation complexity lives on AWS’s side. Your side is three configuration steps: enable SnapStart in the function configuration, publish a new version after each deployment, then point your API Gateway or event source to that specific version (or an alias for it) rather than $LATEST.
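Those three steps can be scripted. The sketch below uses the boto3 Lambda client, passed in as an argument; the function name `enable_snapstart_and_publish` and the alias are hypothetical, and it assumes the alias already exists and is what your API Gateway or event source targets.

```python
def enable_snapstart_and_publish(lambda_client, function_name, alias_name):
    """Enable SnapStart, publish a version, and repoint an existing alias at it.

    lambda_client is expected to be boto3.client("lambda"); alias_name is a
    hypothetical alias that your trigger already invokes.
    """
    # Step 1: turn SnapStart on for versions published from now on.
    lambda_client.update_function_configuration(
        FunctionName=function_name,
        SnapStart={"ApplyOn": "PublishedVersions"},
    )
    # Wait for the configuration update to finish before publishing.
    lambda_client.get_waiter("function_updated").wait(FunctionName=function_name)

    # Step 2: publishing triggers initialization and the snapshot.
    version = lambda_client.publish_version(FunctionName=function_name)["Version"]

    # Step 3: send traffic to the snapshotted version, not $LATEST.
    lambda_client.update_alias(
        FunctionName=function_name,
        Name=alias_name,
        FunctionVersion=version,
    )
    return version
```

Routing through an alias means the trigger never needs to change; each deployment only moves the alias to the newest snapshotted version.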

One team I worked with had a Java payment processing function cold starting at 2,400 milliseconds—dangerously close to their 3-second webhook timeout. SnapStart brought it to 350 milliseconds. The business impact was immediate and obvious. Comfortable headroom where there had been anxiety.

One caveat worth understanding: database connections initialized during your initialization code get frozen into the snapshot. When Lambda restores from that snapshot, you’re holding a stale connection object. You need to detect the restore event and re-establish connections explicitly; for Java, Lambda exposes runtime hooks for this through the CRaC API (beforeCheckpoint and afterRestore). Recent Spring Boot versions can handle this for you, but custom code needs explicit handling. Don’t skip this step or you’ll debug some very confusing connection failures at 3 AM.
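If wiring up a restore hook isn’t practical, a simpler fallback is to validate the connection before each use and reconnect when it no longer responds. To be clear, this is a different technique from the restore hook described above, shown in Python to match the other examples here and with `sqlite3` standing in for a real database client; the function names are illustrative.

```python
import sqlite3

_connection = None


def _connect():
    return sqlite3.connect(":memory:")  # stand-in for a real database client


def get_live_connection():
    """Return a usable connection, replacing one that no longer responds."""
    global _connection
    if _connection is not None:
        try:
            _connection.execute("SELECT 1")  # cheap liveness probe
        except sqlite3.Error:
            _connection = None  # stale, for example frozen into a snapshot
    if _connection is None:
        _connection = _connect()
    return _connection
```

The probe adds a small cost to every call, so a restore hook is still the cleaner option when your runtime supports one.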

Architecture Patterns That Avoid Cold Starts Entirely

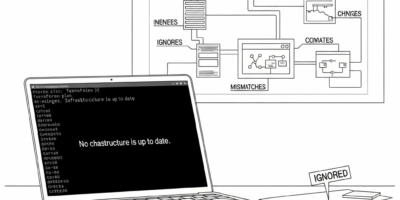

Sometimes the best optimization is architectural. With all the noise flying around about Provisioned Concurrency and SnapStart, it’s easy to forget that occasionally the right answer is simply designing around the problem.

The Keep-Warm Pattern

The classic workaround: send dummy invocations on a schedule to keep containers from going cold. An EventBridge (formerly CloudWatch Events) rule triggers every minute, invoking your actual function with a synthetic keep-alive payload. Your handler checks for that payload and returns immediately if it’s not a real request.
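The handler side of this pattern is tiny. In the sketch below, the `keep_warm` key is a hypothetical marker that you would put in the scheduled rule’s payload yourself, and `process` is a placeholder for the function’s real logic.

```python
WARMER_KEY = "keep_warm"  # hypothetical marker set in the scheduled rule's payload


def handler(event, context=None):
    """Short-circuit synthetic pings; do real work for everything else."""
    if isinstance(event, dict) and event.get(WARMER_KEY):
        return {"warmed": True}
    return process(event)


def process(event):
    # Placeholder for the function's real logic.
    return {"warmed": False, "echo": event}
```

Checking the marker first means a ping costs almost no billed duration and never touches downstream systems.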

It works. It’s inelegant—a scheduling hack dressed up as infrastructure. But the cost is effectively nothing. One invocation per minute is 43,200 monthly invocations, and Lambda’s free tier covers 1 million. You’re keeping containers warm for free.

I use this for functions with sporadic, unpredictable traffic gaps, such as API endpoints that might sit idle for hours then suddenly absorb a burst of requests. The keep-warm invocation keeps one container ready most of the time. It isn’t a guarantee: Lambda can still recycle that container, and a burst that needs more than one concurrent container cold-starts the extras. But cold start frequency drops dramatically. For some workloads, that’s enough.

Lambda@Edge for API Responses

CloudFront Lambda@Edge functions execute at edge locations close to your users. They still cold-start like any other Lambda function, but running near the user cuts network round-trip time, and execution is globally distributed. That’s what makes Lambda@Edge appealing to latency-obsessed practitioners.

Use Lambda@Edge for simple request transformation or response formatting. Keep heavier business logic in regional Lambda functions warmed by keep-alive patterns or Provisioned Concurrency. The edge function adds latency only on true cold starts, which become infrequent when your regional functions stay warm.

When to Use ECS or Fargate Instead

This is the honest conversation nobody wants to have: sometimes Lambda isn’t the right tool. If cold starts are genuinely killing your performance and you’ve exhausted optimization options, ECS Fargate might be the answer—and pretending otherwise costs your users real pain.

Fargate containers stay running. No cold starts. You pay for compute time whether the container is actively processing or sitting idle between requests. For high-traffic, latency-sensitive workloads, that idle cost earns its keep. For sporadic traffic, Lambda remains the better economics.

Frustrated by 300-millisecond cold starts adding unacceptable delay to time-critical alerts, one client I worked with moved their real-time notification processing to two small Fargate tasks running continuously. Sub-50-millisecond responses, no cold start variability. Monthly cost ran roughly $200 more than Lambda would have. Their SLA requirements made that math easy.

While you won’t need to run Fargate for every latency problem, you will need a clear framework for making the call. Lambda cold starts acceptable? Stay on Lambda. Cold starts unacceptable and optimization maxed out? Consider Fargate. Need both throughput and latency at scale? ECS with autoscaling. Need permanent idle readiness with no traffic? Fargate or always-on EC2—Lambda wasn’t built for that shape of workload.

AWS Lambda cold start optimization isn’t mystical. Measure first—find where cold starts actually occur and whether real users feel them. Then work in order: code-level optimizations first (free, often enough), architectural patterns second (cheaper, surprisingly effective), Provisioned Concurrency last (expensive but reliable). Most functions never need the expensive solution. Start cheap and work outward from there.
